
Diabetes is one of the leading causes of death around the world. Nearly half a billion people suffer from some form of the disease (422 million as of 2014). Many of them rely on insulin to stay alive. Every day, an injection in the thigh, the arm, or the abdomen is part of their routine. At a different point in history, it would have been impossible to supply them all. That was when humans injected animal insulin and accepted whatever unwanted side effects accompanied introducing foreign proteins into the body. It was also before advances in modern genetics created a new and controversial industry.

The history of therapeutic insulin begins in 1921, when Frederick Banting and Charles Best isolated the hormone from a dog's pancreas. The hormone takes its name from the tissue responsible for its production, the islets of Langerhans. Banting and Best would go on to show that it could alleviate the symptoms of Type 1 diabetes. In 1955, Frederick Sanger determined that the hormone is composed of 51 amino acids. According to Leigh Rivers and Eva Fauzon, "The molecule is an archetype for the so-called replacement therapy category of biologics, in that it is introduced into the body to correct a physiological deficiency."

When insulin was first given therapeutically, production involved harvesting the hormone from the pancreases of pigs and cattle. While it worked well enough for a good number of patients, it was far from ideal. The treatment had a serious shortcoming: because it came from animals, the insulin was often identified by human immune systems as foreign, causing allergic reactions. A better way forward was needed.

Enter Herbert Boyer, Stanley Cohen, and Robert Swanson.

In 1973, Boyer, at the University of California, San Francisco, and Cohen, at Stanford University, showed how to use restriction enzymes to essentially cut and paste genes of their choosing into strands of DNA, building on earlier work by Stanford biochemist Paul Berg. The newly formed DNA could then be coaxed into expressing the genes they had inserted. The new technology, called recombinant DNA, ushered in a new era.

When a venture capitalist named Robert Swanson heard of their discovery, he called Boyer and requested a meeting. The wary scientist replied that he would give only 10 minutes of his time. The meeting lasted three hours. Swanson convinced Boyer of the commercial viability of recombinant DNA, and by the time the meeting ended, the groundwork had been laid for Genentech, often described as the world's first biotechnology company.

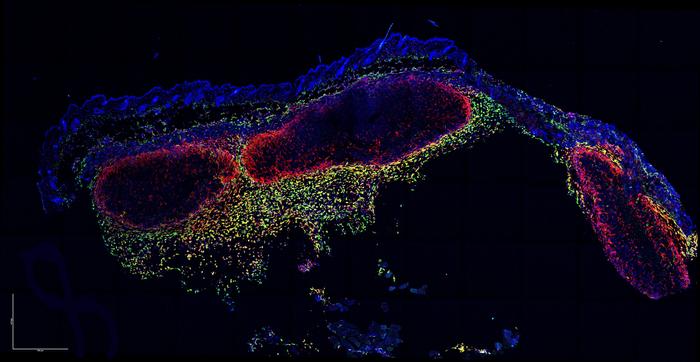

A team at Genentech, working with researchers at the City of Hope, chemically synthesized DNA coding for the two chains of human insulin. Each tiny stretch of DNA was then inserted into a plasmid, the circular bit of standalone DNA that bacteria often use to exchange genes. The hybrid plasmids were then introduced into Escherichia coli, which was allowed to replicate. When all was said and done, the bacteria had been transformed into microscopic insulin factories.

The new biosynthetic insulin faced considerable skepticism from the academic and business communities. It wasn't until 1982 that it reached the market. Eli Lilly licensed the insulin and took charge of marketing. The company called it Humulin R.

Interestingly, news coverage of the first genetically engineered drug did not question its safety, readily accepting the speed with which Humulin won FDA approval.

According to the New York Times, “In the remarkably short time of five months, the Food and Drug Administration last week gave Eli Lilly and Company the right to manufacture insulin using gene-splicing techniques. The new product – Humulin – should be available without a prescription sometime in the next year.”

According to the report, the hormone was tested on 400 patients at 12 medical centers. That's all. This very limited clinical trial was considered robust enough. The reasons for its speedy approval may sound familiar:

“The F.D.A.’s action follows by one month approval by British officials of Humulin for use in Britain. The reason for the speedy action in the United States, despite the newness of the product, was attributed to ‘the vast amount of prior experience with the animal insulins, which are very closely related to’ Humulin.”

That rationale would be echoed a decade later by the Organization for Economic Cooperation and Development (OECD) when it introduced the notion of substantial equivalence.

*

After the OECD established the role of substantial equivalence in the evaluation of genetically modified crops in 1993, other organizations followed its lead, rubber-stamping the concept. Testing new varieties became fairly straightforward, perhaps to the point of being reductive. Still, the approach provided a degree of clarity.

According to Fred Gould, William Neal Reynolds Professor of Agriculture at NC State, “Substantial equivalence is a concept that is based on the assumption that if food from a genetically engineered variety is compared to the same food from a non-engineered variety and they have the same biochemical makeup, then they are equivalent, so the GE food is considered safe.”


The Food and Agriculture Organization of the United Nations (FAO) and the World Health Organization (WHO) weighed in through their Codex Alimentarius Commission, a joint body that develops food standards, guidelines, codes of practice, and other relevant documents under the Joint FAO/WHO Food Standards Programme. According to its guidelines for assessing GM crops:

"The concept of substantial equivalence is a key step in the safety assessment process. However, it is not a safety assessment in itself; rather it represents the starting point which is used to structure the safety assessment of a new food relative to its conventional counterpart. This concept is used to identify similarities and differences between the new food and its conventional counterpart. It aids in the identification of potential safety and nutritional issues and is considered the most appropriate strategy to date for safety assessment of foods derived from recombinant-DNA plants. The safety assessment carried out in this way does not imply absolute safety of the new product; rather, it focuses on assessing the safety of any identified differences so that the safety of the new product can be considered relative to its conventional counterpart."

Establishing the substantial equivalence of a GM crop involved two key steps. First, rather than attempting to catalog and match every chemical in the variety, regulators trimmed the checklist down to important components, comparing agronomic, morphological, and chemical characteristics such as macro- and micronutrients and toxic molecules. Second, if a considerable difference was spotted, further tests would be performed.

Kent Bradford, Distinguished Professor in the Department of Plant Sciences at the University of California, Davis, explains, “The concept mainly involves the constituents of the food and whether they are within the range of normal variation for non-GM equivalent plants or foods. It also involves assessing what the change in the plant involves and whether it has a reasonable likelihood of changing anything relevant to food safety.”

This so-called targeted approach had a number of advantages. It drew on existing knowledge of specific crops and, in doing so, reduced the time it took to bring a GM crop to market. It also lowered overall production costs and, by extension, allowed the commercial product to be priced competitively; longer, more detailed testing would have incurred significant costs. Clearly, the design of substantial equivalence favored the biotechnology and agricultural industries.

During the late 1990s and early 2000s, scientific journals published a flurry of papers wary of genetically modified foods and substantial equivalence. That wariness was soon reflected in the public discourse around GMOs, a debate that quickly devolved into hysteria.

One paper, published in the influential journal Nature, sharply criticized the concept of substantial equivalence. Erik Millstone, Eric Brunner, and Sue Mayer argued that relying on a GM food's chemical composition as a guide to whether it is safe to eat was inadequate. In particular, they took issue with the targeted approach, whose selection of components they characterized as random.

According to Millstone et al., “scientists are not yet able reliably to predict the biochemical or toxicological effects of a GM food from a knowledge of its chemical composition.”

The problem was compounded by the fact that a standardized definition of substantial equivalence had yet to be established.

Millstone et al. insisted that substantial equivalence failed to account for how GM crops change over the course of production. They cited glyphosate-tolerant soybeans (GTSBs) as an example. According to the group, "Although we have known for about 10 years that the application of glyphosate to soya beans significantly changes their chemical composition (for example the level of phenolic compounds such as isoflavones), the GTSBs on which the compositional tests were conducted were grown without the application of glyphosate… If the GTSBs had been treated with glyphosate before their composition was analysed it would have been harder to sustain their claim to substantial equivalence."

The paper concluded with a polemical firebomb:

"Substantial equivalence is a pseudo-scientific concept because it is a commercial and political judgement masquerading as if it were scientific. It is, moreover, inherently anti-scientific because it was created primarily to provide an excuse for not requiring biochemical or toxicological tests. It therefore serves to discourage and inhibit potentially informative scientific research."


The response to Millstone et al.'s commentary was swift, at least from biotech industry defenders and members of the OECD, and Nature published several rebuttals.

Anthony Trewavas and C.J. Leaver challenged the argument that it was impossible to deduce potential toxicity from individual components. They wrote:

“Using the logic of Millstone et al., every new crop seed variety would have to be separately tested for toxicity when it has been treated with every herbicide, every pesticide, fertilizer variations, attack by every individual predator, infection with every individual disease and grown in an astronomically large number of different environmental combinations. We would be drowning in toxicity tests.”

Peter Kearns of the OECD and Paul Mayers of Health Canada took direct exception to Millstone's "pseudo-science" accusation. They pointed out that the experts assembled by the OECD consisted of regulatory scientists and consumer safety experts from around the world, and they addressed the question of substantial equivalence in no uncertain terms:

“Substantial equivalence is not a substitute for a safety assessment. It is a guiding principle which is a useful tool for regulatory scientists engaged in safety assessments… In this approach, differences may be identified for further scrutiny, which can involve nutritional, toxicological and immunological testing.”


Kearns and Mayers' position was the least controversial in the debate, though it was less an argument than an acknowledgment of the process's original intended use and limitations.

Substantial equivalence was never meant to serve as proof of safety, and the OECD clearly stated that it was not designed to replace safety testing. It was meant to be the first step in deciding whether further investigation was needed. The problem, according to critics, was that the agricultural industry had made substantial equivalence the start and end point rolled into one. In other words, the concept had been abused.

Papers continued to question substantial equivalence, and the mood of unease spilled over into the public discourse, spawning a number of anti-GMO groups. The popular media, never really one for nuance, pounced on the idea that GMOs were harmful, or at least potentially harmful. The term 'Frankenfood' took hold. (Never mind that the creature in Shelley's novel was a monster only in the eyes of society and ultimately served as a reflection of its ignorance and prejudice. Again, nuance.)

One phrase in particular would become the rallying cry against substantial equivalence and the multinational corporations it supposedly benefited.

Pseudo-scientific.

It proved to be a rhetorical presage of President Donald Trump’s relentless invocation of the dismissive phrase, “fake news.”

While the tag as initially used by Millstone et al. may have been grounded in research or scientific intuition, anti-GMO activists picked it up and employed it in purely political terms. It was, and continues to be, a buzzword used to stifle debate.

That is unfortunate, because GM foods are not going away, and a good-faith discussion is exactly what is needed as the field moves forward.

*

Nearly thirty years have passed since the OECD published "Safety Evaluation of Foods Derived by Modern Biotechnology: Concepts and Principles." Roughly thirty-two genetically modified crops have passed the approval process in countries around the world. Everything from cowpeas to plums to wheat has made the cut, each crop deemed safe for consumption (or, in the case of cotton, at least safe to use). Yet the notion of safety appears to begin and end at a nation's border. A consistent, internationally agreed-upon quantitative standard has yet to emerge, muddying the waters unnecessarily.

How has substantial equivalence panned out? From appearances, so far so good. There haven't been waves of cancer outbreaks across the globe. People aren't walking around with massive tumors under their collars or bulging out of their abdomens. Human equivalents of Séralini's hideous rats aren't dropping dead as they walk out of McDonald's or Shake Shack. But that evidence is admittedly anecdotal, and understanding the long-term effects of GM food consumption still has a way to go.

Kent Bradford insists that how components interact within the human body shouldn’t be a cause for concern.

“When we eat a salad or soup, the resulting mix in your stomach is quite complicated biochemically,” he says. “Does that imply that it is somehow more unsafe than the individual ingredients that were used to make the soup or salad? There can be biochemical interactions among food ingredients, but this is why a ‘substantial equivalence’ standard is used rather than a specific biochemical standard. We don’t have that type of detailed biochemical data on any type of food, so the comparison is with foods that are and have been eaten without indication of problems (also called Generally Recognized As Safe, or GRAS status), which includes most whole foods.”

Still, there may be room for improvement.

“At this point, the required testing involves about 60 components,” says Fred Gould. “This sounds like a lot but plants have hundreds of components that could differ. There is a question of whether more components should be tested.”

For his part, Erik Millstone, Emeritus Professor of Science Policy at the University of Sussex, continues to harbor doubts about the notion that components can be used as an effective indicator of potential toxicity.

“A fundamental assumption of this approach is that the functioning of organisms can be fully deduced from the functions of their separate parts,” he says. “This approach tries to reduce complexity to something that the panel can manage, but the results of this do not reflect the realities they purport to assess; they fail to acknowledge the true levels of complexities, uncertainties and gaps in knowledge, with the result that harmful consequences may be overlooked.”

Ultimately, Bradford offers a view that extends beyond genetically modified crops and reframes the issue. According to him, "Food safety issues are almost never the result of toxicity in the foods themselves, except for allergenicity where it is clearly the case. Thus, the system tests for known allergens and increasingly uses modeling to predict whether a certain protein has a likelihood of being allergenic. Toxins have largely been eliminated from our domesticated crops."

*

Whether by inspired intuition or dumb luck, Genentech and Eli Lilly’s Humulin R proved to be a blockbuster. It was a game changer the way Mevacor was for Merck. Its offshoots, including Humalog, have proven safe and effective. In 2008, Eli Lilly reported collective sales of $28 billion.

Will the same hold true for genetically modified foods?

WORDS: Marc Landas

SOURCES: An introduction to biologics and biosimilars. Part I: Biologics: What are they and where do they come from? CPI/RPC; The New York Times; Nature; Toxicology; Pharmacy Times; Biologics: A history of agents made from living organisms in the Twentieth Century; OECD; Safety Evaluation of Foods Derived by Modern Biotechnology: Concepts and Principles; ISAAA.

IMAGE SOURCE: Creative Commons