Think about what you do with your hands when you’re home at night pushing buttons on your TV’s remote control, or at a restaurant using all kinds of cutlery and glassware. These skills all rely on touch, since your eyes are busy watching a TV program or choosing something from the menu. Our hands and fingers are incredibly skilled mechanisms, and highly sensitive to boot.

Robotics researchers have long been trying to create “true” dexterity in robot hands, but the goal has been frustratingly elusive. Robot grippers and suction cups can pick and place items, but more dexterous tasks such as assembly, insertion, reorientation, packaging, etc. have remained in the realm of human manipulation. However, spurred by advances in both sensing technology and machine-learning techniques to process the sensed data, the field of robotic manipulation is changing very rapidly.

Highly dexterous robot hand even works in the dark

Researchers at Columbia Engineering have demonstrated a highly dexterous robot hand, one that combines an advanced sense of touch with motor learning algorithms in order to achieve a high level of dexterity.

As a demonstration of skill, the team chose a difficult manipulation task: executing an arbitrarily large rotation of an unevenly shaped grasped object in hand while always maintaining the object in a stable, secure hold. This is a very difficult task because it requires constant repositioning of a subset of fingers, while the other fingers have to keep the object stable. Not only was the hand able to perform this task, but it also did it without any visual feedback whatsoever, based solely on touch sensing.

Beyond the new level of dexterity, the hand worked without any external cameras, so it is immune to lighting, occlusion, and similar issues. Because it does not rely on vision to manipulate objects, it can work in very difficult lighting conditions that would confuse vision-based algorithms; it can even operate in the dark.

“While our demonstration was on a proof-of-concept task, meant to illustrate the capabilities of the hand, we believe that this level of dexterity will open up entirely new applications for robotic manipulation in the real world,” said Matei Ciocarlie, associate professor in the Departments of Mechanical Engineering and Computer Science. “Some of the more immediate uses might be in logistics and material handling, helping ease up supply chain problems like the ones that have plagued our economy in recent years, and in advanced manufacturing and assembly in factories.”

Leveraging optics-based tactile fingers

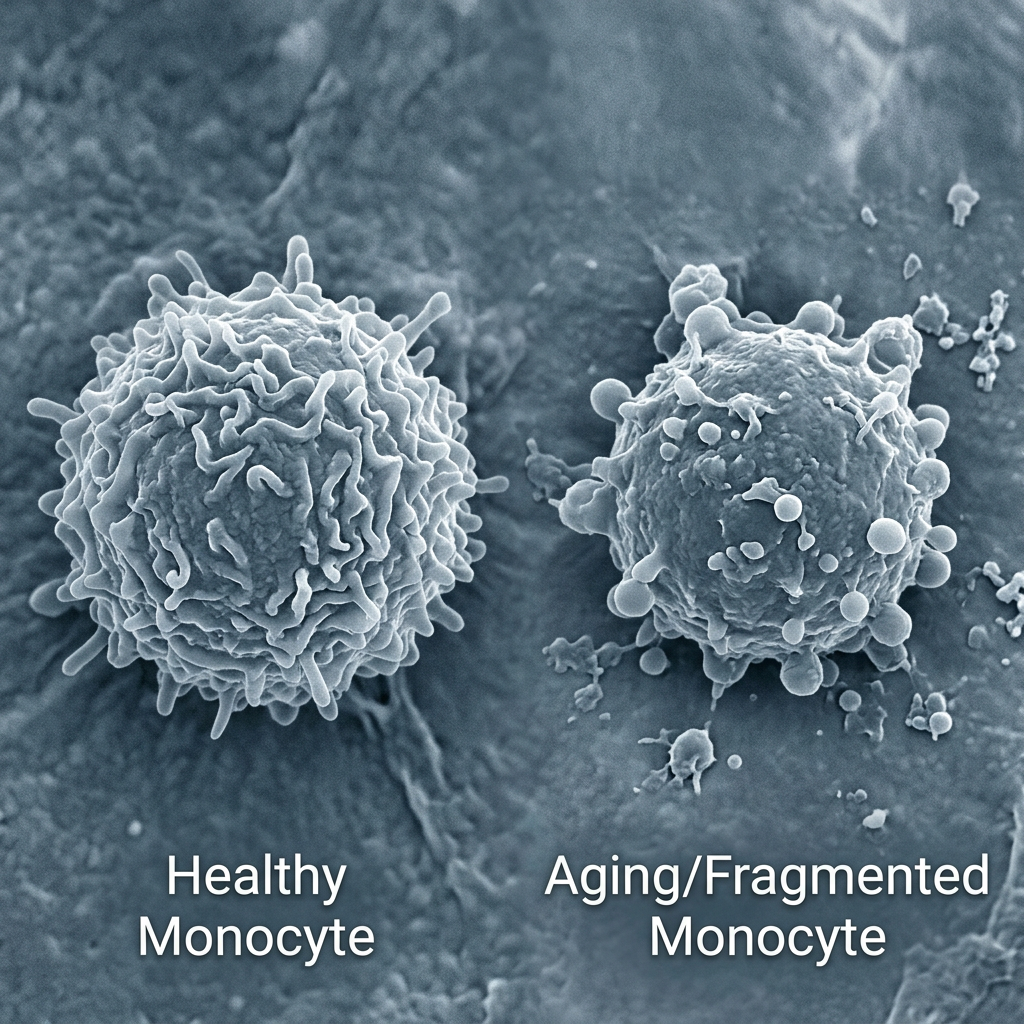

In earlier work, Ciocarlie’s group collaborated with Ioannis Kymissis, professor of electrical engineering, to develop a new generation of optics-based tactile robot fingers. These were the first robot fingers to achieve contact localization with sub-millimeter precision while providing complete coverage of a complex multi-curved surface. In addition, the compact packaging and low wire count of the fingers allowed for easy integration into complete robot hands.

Teaching the hand to perform complex tasks

For this new work, led by Ciocarlie’s doctoral researcher, Gagan Khandate, the researchers designed and built a robot hand with five fingers and 15 independently actuated joints; each finger was equipped with the team’s touch-sensing technology. The next step was to test the ability of the tactile hand to perform complex manipulation tasks. To do this, they used new methods for motor learning, or the ability of a robot to learn new physical tasks via practice. In particular, they used a method called deep reinforcement learning, augmented with new algorithms that they developed for effective exploration of possible motor strategies.

Robot completed approximately one year of practice in only hours of real time

The input to the motor learning algorithms consisted exclusively of the team’s tactile and proprioceptive data, without any vision. Using simulation as a training ground, the robot completed approximately one year of practice in only hours of real time, thanks to modern physics simulators and highly parallel processors. The researchers then transferred this manipulation skill trained in simulation to the real robot hand, which was able to achieve the level of dexterity the team was hoping for. Ciocarlie noted that “the directional goal for the field remains assistive robotics in the home, the ultimate proving ground for real dexterity. In this study, we’ve shown that robot hands can also be highly dexterous based on touch sensing alone. Once we also add visual feedback into the mix along with touch, we hope to be able to achieve even more dexterity, and one day start approaching the replication of the human hand.”
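To put the "one year of practice in only hours" figure in perspective, here is a rough back-of-the-envelope sketch of the speed-up that parallel simulation would have to deliver. The article gives no exact training time or number of simulated hands, so the wall-clock hours below and the `per_env_rate` parameter are illustrative assumptions, not figures from the study.

```python
import math

HOURS_PER_YEAR = 365 * 24  # 8760 hours of simulated practice


def required_speedup(simulated_hours: float, wall_clock_hours: float) -> float:
    """Ratio of simulated experience to the wall-clock time spent collecting it."""
    if wall_clock_hours <= 0:
        raise ValueError("wall_clock_hours must be positive")
    return simulated_hours / wall_clock_hours


def parallel_envs_needed(speedup: float, per_env_rate: float = 1.0) -> int:
    """Simulated hands needed in parallel to reach `speedup`.

    per_env_rate is an assumed value: how many times faster than real
    time a single simulated hand runs (1.0 means exactly real time).
    """
    if per_env_rate <= 0:
        raise ValueError("per_env_rate must be positive")
    return math.ceil(speedup / per_env_rate)


if __name__ == "__main__":
    # Hypothetical wall-clock budgets; the article only says "hours".
    for hours in (4, 8, 12):
        speedup = required_speedup(HOURS_PER_YEAR, hours)
        envs = parallel_envs_needed(speedup)
        print(f"{hours} h of training -> {speedup:.0f}x speed-up, "
              f"about {envs} real-time environments in parallel")
```

Under these assumptions, compressing a year into a working day calls for on the order of a thousand simulated hands running side by side, which is the kind of workload that highly parallel processors are suited to.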

Ultimate goal: joining abstract intelligence with embodied intelligence

Ultimately, Ciocarlie observed, for a physical robot to be useful in the real world, it needs both abstract, semantic intelligence (to understand conceptually how the world works) and embodied intelligence (the skill to physically interact with the world). Large language models such as OpenAI’s GPT-4 or Google’s PaLM aim to provide the former, while dexterity in manipulation as achieved in this study represents complementary advances in the latter.

For instance, when asked how to make a sandwich, ChatGPT will type out a step-by-step plan in response, but it takes a dexterous robot to take that plan and actually make the sandwich. In the same way, researchers hope that physically skilled robots will be able to take semantic intelligence out of the purely virtual world of the Internet, and put it to good use on real-world physical tasks, perhaps even in our homes.

IMAGE CREDIT: Columbia University ROAM Lab
